PostgreSQL has long had an extensible index access method (AM) API, which has stood the test of time and enabled numerous robust extensions to provide their own index types, for example rum, pgvector, bloom, zombodb, and others. PostgreSQL 12 introduced the Table AM API, promising equivalent flexibility for table access methods.

PostgreSQL's Table AM API has been available since version 12, and the built-in storage engine continues to draw criticism, particularly for its MVCC model ([1], [2], [3]). It is therefore surprising that no fully featured transactional storage engine has yet emerged purely as an extension.

Because the table AM and index AM APIs are tightly coupled, this is a problem for both APIs, not only for table access methods.

The features most in demand for alternative PostgreSQL table engines are:

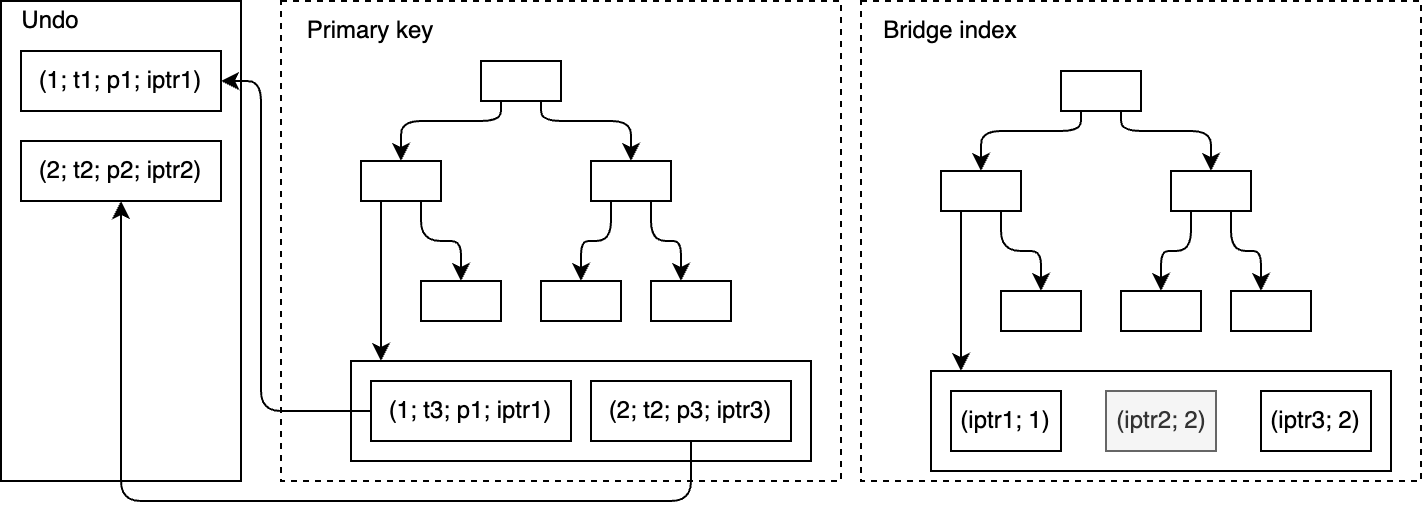

- Alternative MVCC implementations, e.g., UNDO-log-based storage. The motivation for this is discussed at length in the Uber blog post, Andy Pavlo's blog post, and many other sources.

- Non-heap-like storage. For example, in index-organized tables, the index is not an optional addition to the table that speeds up queries but a necessary layer the table storage uses internally. Consequently, a table tuple is part of a complex data structure and can’t be addressed by a fixed-length address such as a page number and offset number. It needs to be addressed by a variable-length identifier such as an index key.

The API extension needed for the second item (non-heap-like storage) is fairly clear: replace ctid with an arbitrary sequence of bytes in all the APIs. The first item (alternative MVCC implementations), however, is considerably more complex and requires much clarification.
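To make the idea of replacing ctid with an arbitrary sequence of bytes more concrete, here is a minimal, self-contained C sketch. It does not use PostgreSQL's headers or reflect any actual or proposed API: `FixedTid`, `RowId`, and all the `rowid_*` functions are hypothetical names invented for illustration. It only shows that a fixed (page, offset) address can be treated as a 6-byte special case of a variable-length identifier, while an index key is simply a longer byte string.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>
#include <stdlib.h>
#include <string.h>

/* Heap-style address: page (block) number plus slot within the page.
 * This mirrors the idea behind ctid, not PostgreSQL's actual struct. */
typedef struct FixedTid {
    uint32_t block;
    uint16_t offset;
} FixedTid;

/* Hypothetical variable-length row identifier: an opaque byte string. */
typedef struct RowId {
    size_t len;
    unsigned char *data;   /* owned by the RowId, released by rowid_free */
} RowId;

/* Copy an arbitrary byte sequence (e.g. a serialized index key). */
RowId rowid_from_bytes(const void *bytes, size_t len)
{
    RowId id = { 0, NULL };
    assert(bytes != NULL || len == 0);
    if (len > 0) {
        id.data = malloc(len);
        if (id.data == NULL)
            abort();
        memcpy(id.data, bytes, len);
        id.len = len;
    }
    return id;
}

/* A fixed address is just the 6-byte special case. Big-endian encoding
 * keeps bytewise order equal to (block, offset) order. */
RowId rowid_from_fixed_tid(FixedTid tid)
{
    unsigned char buf[6];
    buf[0] = (unsigned char)(tid.block >> 24);
    buf[1] = (unsigned char)(tid.block >> 16);
    buf[2] = (unsigned char)(tid.block >> 8);
    buf[3] = (unsigned char)(tid.block);
    buf[4] = (unsigned char)(tid.offset >> 8);
    buf[5] = (unsigned char)(tid.offset);
    return rowid_from_bytes(buf, sizeof buf);
}

/* Bytewise comparison; an identifier that is a proper prefix of another
 * sorts before it. Returns <0, 0 or >0. */
int rowid_compare(const RowId *a, const RowId *b)
{
    size_t n = a->len < b->len ? a->len : b->len;
    int c = n > 0 ? memcmp(a->data, b->data, n) : 0;
    if (c != 0)
        return c;
    return (a->len > b->len) - (a->len < b->len);
}

/* Release the bytes and reset the identifier to the empty state. */
void rowid_free(RowId *id)
{
    free(id->data);
    id->data = NULL;
    id->len = 0;
}
```

In this sketch, a heap-like engine would keep producing 6-byte identifiers through `rowid_from_fixed_tid`, while an index-organized engine would pass its serialized key to `rowid_from_bytes`. The cost of the generalization is visible even here: identifiers now need allocation, an explicit length, and a comparison function, which is part of why the change touches every API that carries a row address.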

OrioleDB is an example that illustrates the motivation for these changes to the table and index AM APIs. It is an extension providing a table access method that we developed to address many well-known shortcomings of the built-in storage engine. However, OrioleDB is not yet a drop-in extension; it requires several patches to the PostgreSQL core.

Besides these two needs, which are discussed below, there are numerous needs for further API improvements, such as pointer swizzling and alternative WAL logging, that are outside the scope of this post.